November 22, 2019 09:33 AM

In 2018, a meta-analysis of the Partners for Change Outcome Management System (PCOMS) was published in a prestigious research journal. Contradicting a large body of randomized clinical trials, the study essentially trashed PCOMS, claiming that its effects were minimal and largely an artifact of using the Outcome Rating Scale (ORS) as both the outcome measure and the clinical tool. An examination of the included studies revealed flaws so egregious that including those studies was almost comical.

Missing: Adequate Dose and Adherence

For example, the meta-analysis included studies in which the intervention was too brief to produce a feedback effect: four studies with fewer than 4 sessions, and two studies with approximately 2 sessions. By contrast, studies finding a feedback effect averaged more than 4 sessions. You have to allow time for the feedback effect to happen.

Also included were seven studies with low, intermittent, or even absent adherence to the PCOMS protocol of administering and discussing the measures in every session. Seriously, could you expect to find a feedback effect when the measures “were used half of the time at undetermined levels”? Studies with reasonable adherence have found a feedback effect.

In total, nine of the selected studies had an inadequate dose of the intervention, significant adherence problems, or both. A meta-analysis holds up only to the extent that the studies it analyzes are methodologically sound enough to support valid conclusions.

Finally, the meta-analysis failed to mention the many findings that contradict its claims about the ORS being used as both outcome measure and clinical tool. Unbelievably, nine studies included in the analysis demonstrated a feedback effect on measures other than the ORS. Yet the authors do not mention them.

Responding to the Research Bias

When we read the study, my longtime friend and collaborator Dr. Jacqueline Sparks and I knew we had to write a response. Here is the abstract:

Consumers of psychotherapy outcome literature consider meta-analysis the gold standard for assessing the efficacy of interventions across disparate studies. Many assume that findings are valid, especially when published in journals with research credentials. Uncritical acceptance, however, can result in real-world consequences, including whether interventions attain evidence-based status or become marginalized or are considered for implementation in public service arenas.

This article examines one meta-analysis, “The Effect of Using the Partners for Change Outcome Management System as Feedback Tool in Psychotherapy—A Systematic Review and Meta-Analysis” (Østergård, Randa, & Hougaard, 2018). The findings are at odds with both the empirical record of routine outcome management as well as professional task force recommendations and thus provide an ideal exemplar of the risks of uncritically accepting the conclusions of a meta-analysis.

Using guidelines from the Cochrane Handbook for Systematic Reviews of Interventions (Higgins & Green, 2011) and a qualitative case study methodology, this article examines Østergård et al.’s (2018) study selection, quality of evidence, and appropriateness of interpretation, emphasizing the link between flawed method and the ultimate validity of its conclusions. The method illustrated in this case study can be used to assess the legitimacy of meta-analytic findings to inform practice, funding, and policy decisions as well as how rhetoric minimizes flaws and bolsters believability.

Our analysis revealed that half of the selected studies of the meta-analysis contained significant limitations, including inadequate dose of treatment and/or adherence problems, thereby calling into question its conclusions.

Check out the article here. Or email me at barryduncan@betteroutcomesnow.com for a copy.

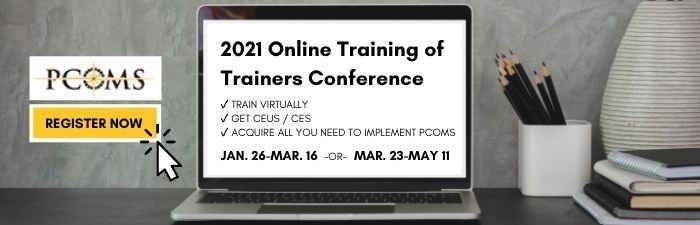

And don’t forget to register for the upcoming Training of Trainers Conference, where you'll learn not only how to properly implement PCOMS but also how to train others to do the same. In addition, you'll gain an in-depth understanding of the rationale for using PCOMS and of the respected randomized clinical trials that support it.
