July 30, 2021 03:15 PM

Meta-analysis is meant to provide a statistically accurate assessment of an intervention’s efficacy that is not possible with a single study, synthesizing disparate data to facilitate informed, practical decisions about its use. But it can also miss the boat. My friend and colleague, Dr. Jacqueline Sparks, and I (Duncan & Sparks, 2020) exposed the flaws and misleading conclusions of one such meta-analysis (Østergård et al., 2018).

Although the study was chock-full of misinformation, the bottom line is that it reached grossly false conclusions based on six studies that should not have been included in the analysis in the first place. We effectively eviscerated the shoddy scholarship of that meta-analysis and highlighted that when only a limited number of studies are available, the conclusions can be greatly influenced by a few bad apples.

The Same Six Studies

Why am I bringing this up? Because since that study, two more meta-analyses of the Partners for Change Outcome Management System have been conducted (PCOMS; DeJong et al., 2021; Pejterson et al., 2020), and guess what? They included the same lame studies.

There have been 15 randomized clinical trials (RCTs) of PCOMS; 9 have found a significant feedback effect while 6 have not. The impact of the six not finding an effect, when aggregated with the nine that did, has led some to conclude that PCOMS only minimally improves outcomes. Of the six studies that found no benefit for feedback, one was conducted in an inpatient setting with adolescents (Lester, 2012), three focused on outpatient therapy (Kellybrew-Miller, 2014; Murphy et al., 2012; Rise et al., 2012), one addressed group therapy for adults with an eating disorder (Davidsen et al., 2017), and another was in an emergency psychiatry setting with adults (van Oenen et al., 2016). Given that three of the six were with more severely distressed populations, some have posited this as a potential limit of PCOMS.

Over-Attributed Significance to Questionable Conclusions

Given the limited number of studies that have been conducted, an over-attribution of significance to questionable conclusions has emerged from studies of dubious methodology. Returning to those six studies: Four did not meet a minimal threshold for adequate treatment for a feedback effect, i.e., at least 4 sessions (Murphy et al., 2012; Rise et al., 2012); two of the four, Kellybrew-Miller (2014) and Lester (2012), averaged but 2.2 and 1.7 sessions, respectively! Similarly, five of the six RCTs not finding an effect had significant adherence problems. They did not follow the documented PCOMS protocol, with problems ranging from the results not being discussed with clients (Davidsen et al., 2017) or the Session Rating Scale (SRS) not being used (Murphy et al., 2012) to PCOMS being used only about two-thirds of the time (Kellybrew-Miller, 2014; van Oenen et al., 2016) and/or substantial negative therapist perceptions of PCOMS usefulness (Davidsen et al., 2017; Lester, 2012).

Greater Than 4 Sessions Plus Adherence Equals a Feedback Effect

When studies average 4 sessions or more and when adherence to the PCOMS protocol is reasonable, there are significant feedback effects, as the other 9 studies have demonstrated. A meta-analysis holds up only to the extent that the studies analyzed are methodologically sound and, thus, support the validity of its conclusions. The initial misstep of including these 6 studies generates a chain of necessarily flawed interpretations that overreach the data upon which they are based, and those interpretations are amplified to almost “fact” status through repetition.
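To make the arithmetic of that misstep concrete, here is a minimal sketch of how a pooled estimate shifts when a handful of null studies are averaged in. Everything in it is an assumption for illustration: the effect sizes and standard errors are invented round numbers, not values from the PCOMS trials, and the simple fixed-effect inverse-variance model is a stand-in that may differ from the models used in the meta-analyses discussed here.

```python
import math


def pooled_effect(studies):
    """Fixed-effect inverse-variance pooling.

    `studies` is a list of (effect_size, standard_error) pairs.
    Returns the pooled effect size and its standard error.
    """
    weights = [1.0 / se ** 2 for _, se in studies]
    total_weight = sum(weights)
    estimate = sum(w * d for w, (d, _) in zip(weights, studies)) / total_weight
    return estimate, math.sqrt(1.0 / total_weight)


# HYPOTHETICAL numbers, chosen only to show the mechanics.
# Nine studies with a moderate effect, six with no effect, all equally precise.
adequate = [(0.40, 0.10)] * 9
questionable = [(0.00, 0.10)] * 6

all_est, all_se = pooled_effect(adequate + questionable)
adequate_est, adequate_se = pooled_effect(adequate)

print(f"All 15 studies pooled:   d = {all_est:.2f}")
print(f"Nine adequate studies:   d = {adequate_est:.2f}")
```

With these made-up inputs, the pooled effect drops from 0.40 to about 0.24 once the six null studies are included. When only 15 studies exist, six of them carry enough weight to reshape the headline number, which is why the decision about which studies belong in the analysis matters so much.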

When there are so few studies available, researchers should examine each study for obvious problems and consider whether it should be included. The lack of this scholarly scrutiny leads to the classic criticism of meta-analysis, apropos here:

Garbage in, garbage out.

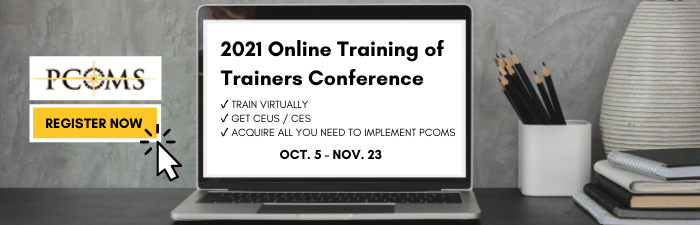
